Matrix Factorization: The Simplest Deep Explanation You Will Ever Read

AI Engineer specializing in LLMs, Generative AI, Computer Vision, and on-device ML. I build practical AI systems—from training models from scratch to deploying optimized solutions on real hardware. I write about LLM internals, model compression, Vision Transformers, CoreML, and end-to-end AI engineering. Currently building DeepGuard (on-device deepfake detection).

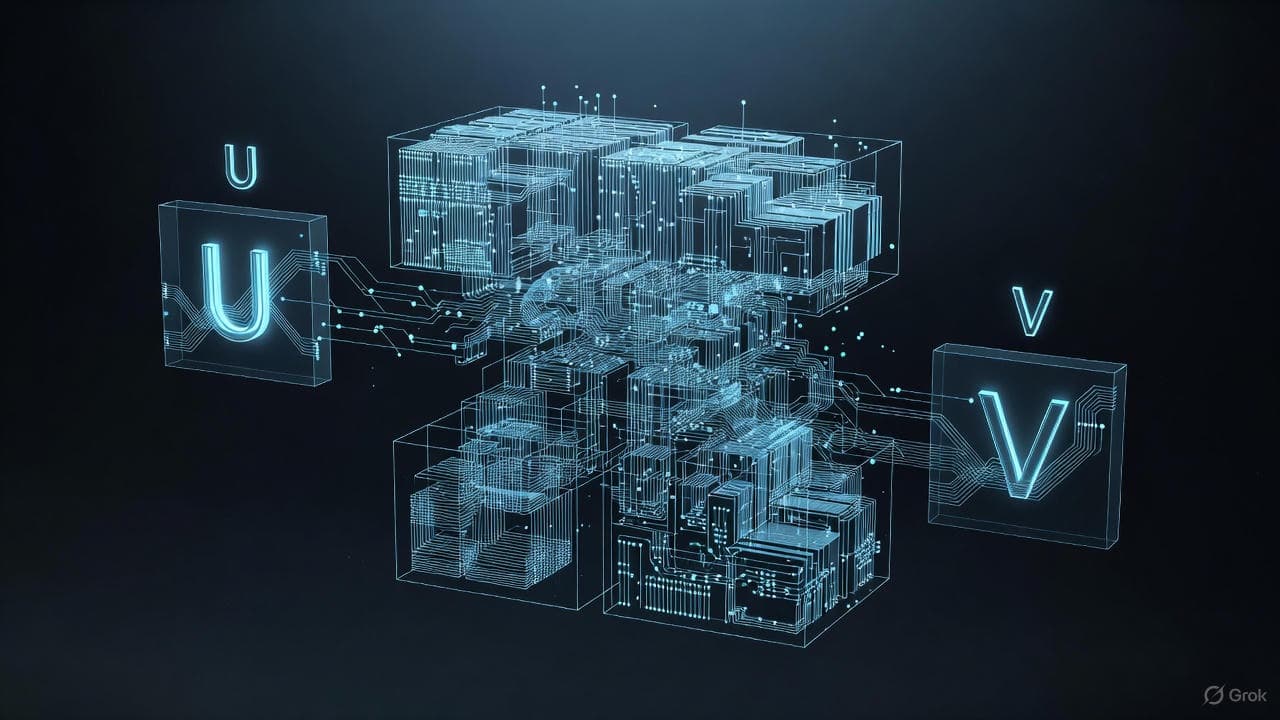

In notation, we write:

A ≈ U × Vᵀ

But what does this really mean? And why do major AI systems like Netflix, Spotify, Amazon, TikTok, PCA, and SVD rely on it?

Let’s break it down clearly.

1. What Matrix Factorization Actually Means

A matrix is just a big table of numbers: ratings, pixels, embeddings, features, anything.

Matrix factorization says:

- The data contains hidden patterns

- These patterns are much fewer than the raw dimensions

- We can represent the big matrix using two smaller matrices

This is called low-rank structure.

Example:

- A is a large m × n matrix

- Choose hidden dimension k (much smaller)

We factorize:

U is m × k V is n × k

Reconstruction:

A ≈ U × Vᵀ

Storage reduces from:

m × n → k(m + n)

A massive compression.

2. A Simple Real-World Analogy: Movie Ratings

Imagine a large (10,000 × 1,000) user-movie rating matrix. Most entries are missing.

Matrix factorization assumes:

- Each user has hidden tastes (action, romance, thrill…)

- Each movie has hidden traits (action level, romance level…)

So instead of storing the whole matrix, we store:

- U → user taste vectors

- V → movie trait vectors

To predict a user’s rating for a movie:

Take the dot product of their vectors.

That’s exactly how Netflix works.

3. Why Matrix Factorization Works So Well

A. Real-world data is low-rank Only a few patterns explain most of the variation.

B. It naturally predicts missing values Reconstruction fills them.

C. It denoises Only the strongest patterns remain.

D. It compresses dramatically Used in LLM weight compression.

E. It speeds up models Small matrices → faster operations.

4. The Intuition Behind the Math

Matrix factorization finds:

U and V such that the difference between A and U × Vᵀ is as small as possible.

Error minimized:

|| A − U × Vᵀ ||²

Gradient descent updates:

- rows of U

- rows of V

until the reconstruction error is low.

Think of it like:

- Discover hidden structure

- Store it in U and V

- Rebuild the matrix using those hidden factors

5. One Perfect Example (Small & Clear)

Rating matrix:

M1 M2 M3

U1 5 ? 4

U2 4 2 ?

U3 ? 1 2

Assume 2 hidden factors:

- Action

- Romance

Let U be:

U =

[

0.9 0.2

0.8 0.6

0.1 0.9

]

Let V be:

V =

[

0.7 0.1

0.2 0.8

0.9 0.5

]

Predict rating of User 1 for Movie 2:

User 1 vector: 0.9 , 0.2 Movie 2 vector: 0.2 , 0.8

Dot product:

(0.9 × 0.2) + (0.2 × 0.8) = 0.18 + 0.16 = 0.34

Scaled → roughly 3/5 rating.

This is exactly how recommendation systems work.

6. Practical Implementation (No-Comment Code)

import numpy as np

def matrix_factorization(R, k, steps=5000, lr=0.0002, reg=0.02):

m, n = R.shape

U = np.random.rand(m, k)

V = np.random.rand(n, k)

for _ in range(steps):

for i in range(m):

for j in range(n):

if R[i, j] > 0:

e = R[i, j] - np.dot(U[i], V[j])

U[i] += lr * (e * V[j] - reg * U[i])

V[j] += lr * (e * U[i] - reg * V[j])

return U, V, U @ V.T

R = np.array([

[5, 0, 4],

[4, 2, 0],

[0, 1, 2]

], dtype=float)

U, V, reconstructed = matrix_factorization(R, 2)

print(reconstructed)

This reconstructs the matrix and predicts missing values.

7. Final Summary

Matrix factorization = low-rank structure + hidden pattern discovery + efficient reconstruction.

It powers:

- Recommender systems

- PCA

- SVD

- Topic modeling

- LLM compression

- Image compression

- Value prediction

Author

Karthik Kurra (Gruhesh Sri Sai Karthik Kurra) LinkedIn: https://www.linkedin.com/in/gruhesh-sri-sai-karthik-kurra-178249227/ Portfolio: https://karthik.zynthetix.in